Overview

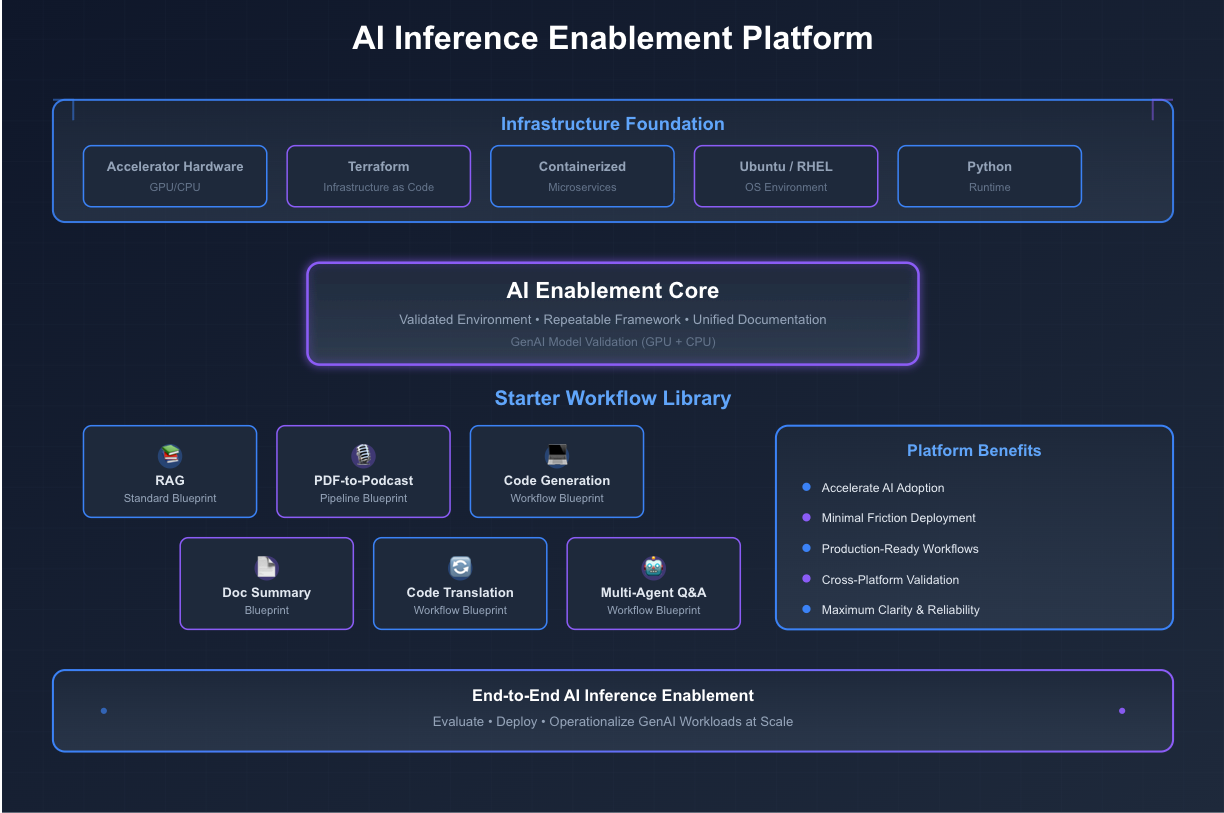

We delivered an end-to-end AI Inference Enablement Platform for on-premises environments that lets organizations evaluate, deploy, and operationalize GenAI workloads on both CPU-based systems and high-performance accelerator hardware. The platform simplifies pre-deployment decision-making, streamlines post-deployment onboarding, and provides ready-to-run workflows and blueprints that demonstrate practical, production-ready AI capabilities.

Technology Stack

● Accelerator-enabled single-node compute environment

● Terraform (Infrastructure as Code)

● Containerized AI microservices

● Ubuntu and RHEL operating systems

● Python-based AI workloads

Details

Inference-First Platform for Enterprise GenAI

Cloud2 Labs delivered a comprehensive AI inference enablement solution designed to help teams adopt GenAI workloads efficiently, reliably, and at scale. The solution provides:

- A validated environment for running AI inference workloads on accelerator-enabled hardware

- Validation of a set of GenAI models on both GPU and CPU, ensuring performance and compatibility across platforms

- A repeatable installation and configuration framework that simplifies deployment for both Enterprise Inference components and GenAI models

Production-Ready AI Blueprints, Not Demos

The delivery includes a library of starter workflows that showcase end-to-end AI applications, including:

- Standard RAG (Retrieval-Augmented Generation) blueprint

- Code generation workflow blueprint

- Document summarization AI blueprint

- Code translation AI workflow blueprint

- Multi-Agent Q&A workflow blueprint adapted for the validated GenAI models

- Hybrid search blueprint

- Document generation blueprint

The delivery also includes unified AI documentation covering deployment, usage, and customization.

This delivery now serves as a core reference platform, enabling organizations to accelerate AI adoption with minimal friction and maximum clarity.