Agentic AI Design: Do Agents Really Need to Communicate?

The rapid evolution of generative AI systems has led to a new paradigm in artificial intelligence known as Agentic AI. Unlike traditional AI models that respond to prompts, agentic AI systems are built around autonomous AI agents capable of reasoning, planning, and executing tasks independently.

These LLM-powered agents can interact with APIs, enterprise tools, and knowledge bases to automate complex workflows. As organizations move toward AI-driven workflow automation, many systems are now being designed as multi-agent architectures, where multiple specialized agents collaborate to complete tasks.

However, a key design question arises:

Do AI agents really need to communicate with each other?

At first glance, communication seems essential. After all, human teams rely heavily on collaboration and discussion. But in enterprise AI architecture, excessive agent-to-agent communication can introduce complexity, latency, and system fragility.

In many modern agentic AI designs, communication between agents is minimized or replaced with orchestration mechanisms.

Understanding when agents should communicate—and when they should not—is critical for building scalable, reliable AI systems.

Understanding Agentic AI Architecture

Agentic AI architecture refers to systems where intelligent agents act autonomously to achieve defined goals.

An AI agent typically performs several core functions:

- Perceiving data from the environment

- Reasoning using large language models (LLMs)

- Planning multi-step actions

- Interacting with external systems and tools

- Evaluating results and adapting behavior

These agents function as autonomous decision systems capable of executing complex workflows across enterprise infrastructure.
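The core functions listed above can be sketched as a small loop. This is a minimal illustration rather than a reference design: `SimpleAgent`, its keyword-matching `plan` method, and the two lambda tools are hypothetical stand-ins, and a real agent would call an LLM where this sketch uses plain Python.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SimpleAgent:
    """Toy agent: plain functions stand in for LLM reasoning and real tools."""
    tools: dict[str, Callable[[str], str]]
    history: list[str] = field(default_factory=list)

    def plan(self, goal: str) -> list[str]:
        # Hypothetical planner: pick every tool whose name appears in the goal.
        # A real agent would ask an LLM to produce this step list.
        return [name for name in self.tools if name in goal]

    def run(self, goal: str) -> list[str]:
        results = []
        for step in self.plan(goal):                  # plan multi-step actions
            output = self.tools[step](goal)           # interact with a tool
            self.history.append(f"{step}: {output}")  # keep a record for evaluation
            results.append(output)
        return results


agent = SimpleAgent(tools={
    "lookup": lambda goal: "3 open tickets",
    "summarize": lambda goal: "summary drafted",
})
outputs = agent.run("lookup tickets then summarize them")
print(outputs)
```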

Typical use cases include:

- Enterprise workflow automation

- IT operations automation

- Data analysis and reporting

- Customer service automation

- Compliance monitoring

In these environments, agents serve as intelligent intermediaries between AI models and enterprise systems.

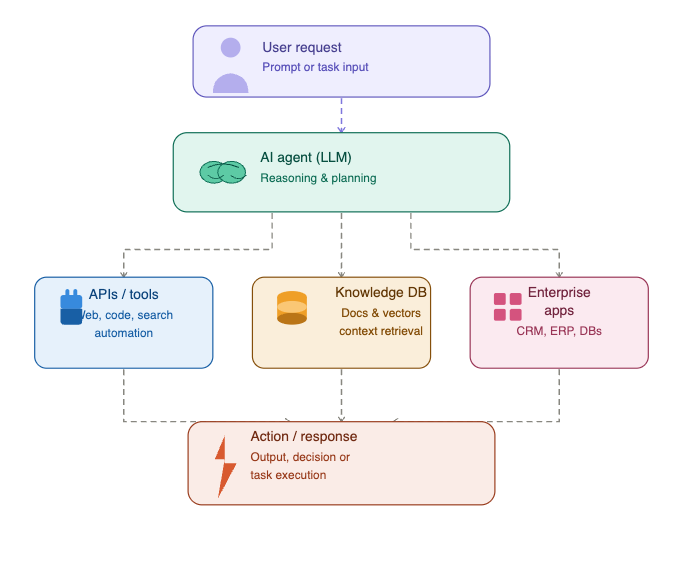

Core Architecture of an AI Agent

Most LLM-powered agents operate within a structured architecture that connects reasoning models with enterprise tools.

Below is a simplified representation of basic agentic AI architecture.

Diagram: Basic Agentic AI Architecture

In this architecture, the LLM acts as the reasoning engine, while tools and APIs provide access to enterprise data and functionality.

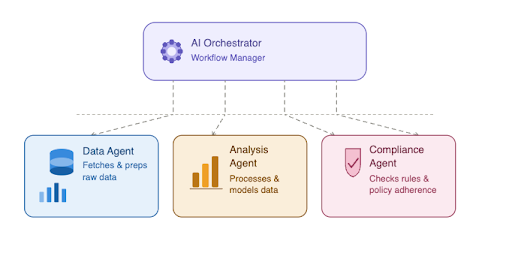

Multi-Agent Systems in Enterprise AI

As workflows become more complex, organizations often introduce multi-agent systems.

Instead of relying on one agent to perform every task, the system includes several specialized agents, each responsible for a specific capability.

For example:

- Data Agent – retrieves information from databases and APIs

- Analysis Agent – performs reasoning and generates insights

- Validation Agent – checks compliance rules or policies

- Execution Agent – performs operational actions

This modular structure improves scalability and allows organizations to design distributed AI systems where agents operate independently.

However, once multiple agents are introduced, coordination becomes a critical design challenge.

Multi-Agent Communication Model

In multi-agent AI systems, communication defines how agents coordinate tasks, share information, and contribute to a common goal. While early implementations relied on simple message passing, modern agentic AI design uses more structured approaches to ensure reliability and scalability.

As multi-agent systems evolve, engineering teams are introducing standardized interaction patterns that define how agents exchange data and trigger actions. These patterns are often referred to as agent-to-agent (A2A) communication models.

Structured Communication in Multi-Agent Systems

Instead of allowing unrestricted communication between agents, modern architectures define clear interaction rules. This ensures that autonomous AI agents operate predictably within complex workflows.

These structured communication approaches are especially important in:

- enterprise AI systems

- AI workflow automation

- distributed AI systems

- LLM-powered agents

Common A2A Communication Patterns

The following communication patterns are commonly used in agentic AI architectures:

1. Instruction-Based Messaging

Agents exchange well-defined instructions rather than free-form conversations.

Each interaction typically includes:

- a specific task

- required input data

- expected output format

This approach reduces ambiguity and keeps communication between AI agents consistent across workflows.
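As a rough sketch, an instruction message can be modeled as a small typed record that is serialized before it is sent. The `Instruction` class, its field names, and the JSON wire format are assumptions made for illustration; the pattern itself does not prescribe them.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Instruction:
    """Hypothetical instruction envelope with the three parts named above."""
    task: str            # the specific task
    input_data: dict     # required input data
    output_format: str   # expected output format


def encode(instruction: Instruction) -> str:
    # Serialize to JSON so that any agent can parse the message.
    return json.dumps(asdict(instruction), sort_keys=True)


def decode(raw: str) -> Instruction:
    # Raises TypeError if a field is missing or unexpected.
    return Instruction(**json.loads(raw))


msg = Instruction(task="summarize_report",
                  input_data={"report_id": 42},
                  output_format="json")
wire = encode(msg)
received = decode(wire)
print(received)
```

Because the receiver rebuilds the same typed record, a malformed message fails at the boundary instead of deep inside the receiving agent.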

2. Delegation-Driven Interaction

Some agents act as coordinators, assigning tasks to specialized agents.

For example:

- a planning agent breaks down a workflow

- assigns tasks to execution or analysis agents

- collects results for final output

This model is commonly used in AI orchestration architecture and helps maintain clear separation of responsibilities.
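The delegation steps above might look like the following sketch. The `Coordinator` class, the worker functions, and the fixed two-step plan are hypothetical; in practice a planning agent would derive the plan from the workflow itself.

```python
from typing import Callable

Worker = Callable[[str], str]


def analysis_agent(task: str) -> str:
    return f"analysis of {task}"


def execution_agent(task: str) -> str:
    return f"executed {task}"


class Coordinator:
    """Hypothetical planning agent that delegates to specialized workers."""

    def __init__(self, workers: dict[str, Worker]):
        self.workers = workers

    def plan(self, workflow: str) -> list[tuple[str, str]]:
        # Assumed fixed breakdown; a real planner would decide this dynamically.
        return [("analysis", workflow), ("execution", workflow)]

    def run(self, workflow: str) -> list[str]:
        # Assign each sub-task to its worker and collect the results.
        return [self.workers[role](task) for role, task in self.plan(workflow)]


coordinator = Coordinator({"analysis": analysis_agent,
                           "execution": execution_agent})
results = coordinator.run("refund request")
print(results)
```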

3. Shared State Coordination

In many modern systems, agents do not communicate directly. Instead, they interact through a shared state layer.

This may include:

- workflow databases

- memory layers

- structured data stores

Agents read from and update this shared state, ensuring consistency without continuous back-and-forth messaging. This pattern is highly effective in enterprise AI systems where scalability is critical.
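A minimal in-memory sketch of this pattern is shown below. `WorkflowState` is a hypothetical stand-in for a workflow database or memory layer, and the lock only illustrates that concurrent writers need some form of synchronization.

```python
import threading


class WorkflowState:
    """Hypothetical shared state layer; a real system would use a database."""

    def __init__(self):
        self._data = {}
        self._lock = threading.Lock()

    def write(self, key, value):
        with self._lock:
            self._data[key] = value

    def read(self, key, default=None):
        with self._lock:
            return self._data.get(key, default)


def data_agent(state: WorkflowState) -> None:
    # Writes its output to shared state instead of messaging another agent.
    state.write("rows", [120, 80, 100])


def analysis_agent(state: WorkflowState) -> None:
    # Reads what the data agent left behind; the two never talk directly.
    rows = state.read("rows", [])
    state.write("average", sum(rows) / len(rows) if rows else None)


state = WorkflowState()
data_agent(state)
analysis_agent(state)
print(state.read("average"))
```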

4. Event-Based Triggering

Agents respond to events rather than direct requests.

Example:

- one agent completes a task and updates system state

- another agent detects the update and initiates the next step

This pattern is widely used in AI workflow automation and allows systems to scale efficiently without tight coupling between agents.
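One way to picture event-based triggering is a small publish/subscribe bus. The `EventBus` class, the event names, and the order payload below are illustrative assumptions; production systems would typically rely on a message broker or a change feed instead.

```python
from collections import defaultdict


class EventBus:
    """Hypothetical in-process event bus."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event: str, handler) -> None:
        self._handlers[event].append(handler)

    def publish(self, event: str, payload: dict) -> None:
        # Copy the handler list so subscriptions made during dispatch are safe.
        for handler in list(self._handlers[event]):
            handler(payload)


bus = EventBus()
log = []


def validation_agent(payload: dict) -> None:
    log.append(f"validated {payload['order_id']}")
    # Announce completion; this agent does not know who reacts to it.
    bus.publish("order.validated", payload)


def execution_agent(payload: dict) -> None:
    log.append(f"shipped {payload['order_id']}")


bus.subscribe("order.created", validation_agent)
bus.subscribe("order.validated", execution_agent)
bus.publish("order.created", {"order_id": "A-1"})
print(log)
```

Neither agent references the other, so either one can be replaced without touching its counterpart.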

5. Schema-Guided Interactions

Modern agentic systems increasingly rely on predefined schemas that define how agents interact.

These schemas specify:

- input structures

- output formats

- validation rules

By enforcing structured communication, organizations can improve the reliability of LLM-powered agents and reduce unexpected behavior.
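The sketch below shows one possible way to enforce such a schema using only the standard library. `FieldSpec`, `ANALYSIS_OUTPUT_SCHEMA`, and the field names are hypothetical; teams often use a dedicated validation library instead.

```python
from dataclasses import dataclass


@dataclass
class FieldSpec:
    type_: type
    required: bool = True


# Hypothetical schema for the output of an analysis agent.
ANALYSIS_OUTPUT_SCHEMA = {
    "summary": FieldSpec(str),
    "confidence": FieldSpec(float),
    "sources": FieldSpec(list, required=False),
}


def validate(message: dict, schema: dict[str, FieldSpec]) -> list[str]:
    """Return a list of validation errors; an empty list means the message is valid."""
    errors = []
    for name, spec in schema.items():
        if name not in message:
            if spec.required:
                errors.append(f"missing field: {name}")
            continue
        if not isinstance(message[name], spec.type_):
            errors.append(f"{name}: expected {spec.type_.__name__}")
    for name in message:
        if name not in schema:
            errors.append(f"unexpected field: {name}")
    return errors


good = {"summary": "Revenue up", "confidence": 0.9}
bad = {"summary": "Revenue up", "confidence": "high", "notes": "n/a"}
print(validate(good, ANALYSIS_OUTPUT_SCHEMA))
print(validate(bad, ANALYSIS_OUTPUT_SCHEMA))
```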

Why Structured Communication Matters

Unstructured communication between agents can quickly lead to:

- increased latency

- inconsistent outputs

- difficult debugging

- fragile system design

By introducing structured A2A interaction models, teams can build scalable and reliable multi-agent systems that perform consistently in production environments.

Balancing Communication and System Design

Although communication is an essential part of agentic AI architecture, excessive interaction between agents can negatively impact performance.

This is why many enterprise AI systems combine:

- limited agent-to-agent communication

- centralized orchestration layers

- shared workflow state

This hybrid approach ensures that systems remain efficient while still enabling collaboration when necessary.

Many early multi-agent AI systems relied heavily on direct communication between agents. The following diagram illustrates this architecture.

Diagram: Multi-Agent Communication Architecture

In this model, agents share information and coordinate tasks through agent-to-agent communication.

While this design enables collaboration, it also introduces several architectural challenges.

Challenges of Agent Communication

Latency

Every communication step adds processing overhead. In systems with multiple agents exchanging messages, latency can accumulate quickly.
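To make the accumulation concrete, the following sketch compares a chain of sequential hand-offs with a parallel fan-out. The per-step latencies are invented for illustration and are not measurements.

```python
def sequential_latency(steps_ms: list[int]) -> int:
    # Each agent waits for the previous one, so the delays add up.
    return sum(steps_ms)


def parallel_latency(steps_ms: list[int]) -> int:
    # Independent agents run side by side; the slowest one dominates.
    return max(steps_ms, default=0)


# Assumed per-agent latencies in milliseconds (illustrative only).
steps = [800, 1200, 600]
print(sequential_latency(steps))  # 2600
print(parallel_latency(steps))    # 1200
```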

Coordination Complexity

Agents must maintain shared context about the workflow. Synchronizing state across distributed agents can be difficult.

Error Propagation

Failures in one agent may disrupt downstream agents that depend on its outputs.
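One common mitigation, sketched here under simple assumptions, is to stop the pipeline at the first failure and report which step failed instead of passing a bad result downstream. `StepResult`, `run_pipeline`, and the three step functions are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StepResult:
    ok: bool
    value: Optional[str] = None
    error: Optional[str] = None


def run_pipeline(steps, initial: str):
    """Run steps in order; halt at the first failure and name the failing step."""
    current = StepResult(ok=True, value=initial)
    completed = []
    for name, step in steps:
        try:
            current = StepResult(ok=True, value=step(current.value))
            completed.append(name)
        except Exception as exc:
            # Downstream steps never see a partial or invalid result.
            return completed, StepResult(ok=False, error=f"{name} failed: {exc}")
    return completed, current


def fetch(x: str) -> str:
    return x + " fetched"


def analyze(x: str) -> str:
    raise ValueError("empty dataset")


def report(x: str) -> str:
    return x + " reported"


completed, result = run_pipeline(
    [("fetch", fetch), ("analyze", analyze), ("report", report)], "q3")
print(completed, result)
```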

Observability Challenges

Tracking interactions across multiple communicating agents can make debugging complex.

Because of these challenges, many modern enterprise AI systems aim to reduce direct agent communication.

When AI Agents Actually Need Communication

Despite its drawbacks, agent communication is still necessary in some scenarios.

Distributed Problem Solving

Some problems require multiple specialized agents to contribute unique capabilities.

For example:

- A compliance agent interprets regulations

- A financial agent evaluates transactions

- A reporting agent generates documentation

These agents must exchange information to produce accurate outcomes.

Parallel Task Execution

In some workflows, tasks must run simultaneously.

Example:

- One agent retrieves customer data

- Another analyzes product usage

- A third generates recommendations

Communication is needed to combine results into a final output.
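The example above can be sketched with Python's standard `concurrent.futures` module. This sketch assumes the recommendation step depends on the other two, so only the first two agents run in parallel; the agent functions and their return values are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def customer_data_agent(customer_id: str) -> dict:
    return {"customer": customer_id, "plan": "pro"}


def usage_agent(customer_id: str) -> dict:
    return {"logins_last_30d": 14}


def recommendation_agent(profile: dict, usage: dict) -> str:
    # Combines both partial results into the final output.
    if usage["logins_last_30d"] >= 10 and profile["plan"] == "pro":
        return "offer annual billing"
    return "send onboarding tips"


def recommend(customer_id: str) -> str:
    with ThreadPoolExecutor(max_workers=2) as pool:
        # The two independent lookups run concurrently.
        profile_future = pool.submit(customer_data_agent, customer_id)
        usage_future = pool.submit(usage_agent, customer_id)
        # result() blocks until each agent has finished.
        return recommendation_agent(profile_future.result(),
                                    usage_future.result())


print(recommend("c-1"))
```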

Negotiation-Based Systems

Certain distributed AI environments involve agents negotiating decisions, such as:

- Supply chain optimization

- Resource scheduling

- Autonomous infrastructure management

These systems depend on structured communication between agents.

Orchestrated Agent Architecture (Enterprise Model)

Many enterprise AI platforms now favor orchestrated agent architectures.

Instead of agents communicating directly, a central orchestrator manages workflow coordination.

Diagram: AI Orchestrator Architecture

This architecture provides several benefits:

- Simplified system design

- Centralized workflow control

- Improved monitoring and observability

- Reduced communication overhead

Because of these advantages, AI orchestration architectures are becoming the standard for enterprise agentic AI systems.
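A minimal sketch of this idea follows. The `Orchestrator` class, the agent functions, and the payment workflow are assumptions for illustration: the orchestrator owns the step order and the shared context, and the agents never call each other.

```python
class Orchestrator:
    """Hypothetical central orchestrator: owns step order, context, and a trace."""

    def __init__(self, agents: dict):
        self.agents = agents
        self.trace = []

    def run(self, workflow: list[str], context: dict) -> dict:
        for step in workflow:
            # Each agent sees the context and returns only its own additions.
            context = {**context, **self.agents[step](context)}
            self.trace.append(step)  # a single place to observe the workflow
        return context


def data_agent(ctx: dict) -> dict:
    return {"balance": 250}


def validation_agent(ctx: dict) -> dict:
    return {"approved": ctx["balance"] >= ctx["amount"]}


def execution_agent(ctx: dict) -> dict:
    return {"status": "paid" if ctx["approved"] else "rejected"}


orchestrator = Orchestrator({"data": data_agent,
                             "validate": validation_agent,
                             "execute": execution_agent})
final = orchestrator.run(["data", "validate", "execute"], {"amount": 100})
print(final, orchestrator.trace)
```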

Shared State Architectures

Another design pattern replaces messaging with shared workflow state.

In this model, agents interact with a shared data layer instead of communicating directly.

Agents read and write information to:

- Workflow state databases

- Shared memory layers

- Task queues

This approach ensures that all agents operate on consistent information while minimizing communication complexity.
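As a small illustration of the task-queue variant, the sketch below uses Python's standard `queue` module. The producer and worker functions and the `results` dictionary are hypothetical; a production system would more likely use a durable queue.

```python
import queue

tasks: queue.Queue = queue.Queue()
results: dict[str, str] = {}


def producer_agent() -> None:
    # Enqueues work without knowing which agent will pick it up.
    for doc_id in ("doc-1", "doc-2"):
        tasks.put({"doc_id": doc_id})


def worker_agent() -> None:
    # Drains the queue and writes outcomes to the shared results store.
    while True:
        try:
            task = tasks.get_nowait()
        except queue.Empty:
            break
        results[task["doc_id"]] = "indexed"
        tasks.task_done()


producer_agent()
worker_agent()
print(results)
```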

When a Single Agent Is Enough

Interestingly, many use cases do not require multi-agent systems at all.

Modern LLM-powered agents can:

- Call multiple APIs

- Manage multi-step workflows

- Analyze intermediate results

- Dynamically adjust plans

In such cases, a single intelligent agent can orchestrate tasks internally without requiring collaboration between multiple agents.

For smaller or moderately complex workflows, this approach often produces simpler and more reliable systems.
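The sketch below illustrates a single agent that picks its next tool based on intermediate results. The tools, the threshold of 100, and the `choose_next` rule are hypothetical; in a real agent, an LLM would make the decision that `choose_next` hard-codes here.

```python
def fetch_orders(state: dict) -> dict:
    return {"orders": [30, 45, 500]}


def flag_outliers(state: dict) -> dict:
    return {"outliers": [o for o in state["orders"] if o > 100]}


def write_report(state: dict) -> dict:
    flagged = len(state.get("outliers", []))
    return {"report": f"{len(state['orders'])} orders, {flagged} flagged"}


TOOLS = {"fetch_orders": fetch_orders,
         "flag_outliers": flag_outliers,
         "write_report": write_report}


def choose_next(state: dict):
    # Stand-in for the LLM's decision; it adapts the plan to what was found.
    if "orders" not in state:
        return "fetch_orders"
    if "outliers" not in state and max(state["orders"]) > 100:
        return "flag_outliers"
    if "report" not in state:
        return "write_report"
    return None


def run_single_agent(max_steps: int = 10):
    state, steps = {}, []
    for _ in range(max_steps):  # bound the loop so the agent cannot run forever
        tool = choose_next(state)
        if tool is None:
            break
        state.update(TOOLS[tool](state))
        steps.append(tool)
    return state, steps


state, steps = run_single_agent()
print(steps, state["report"])
```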

Agent Frameworks Supporting Agentic AI

Several frameworks are helping developers build agentic AI architectures.

Examples include:

LangChain Agents

LangChain enables developers to build LLM-powered agents that interact with tools and APIs.

AutoGen

AutoGen supports multi-agent collaboration frameworks, enabling agents to communicate and coordinate tasks.

CrewAI

CrewAI focuses on structured multi-agent workflow orchestration.

Semantic Kernel

Semantic Kernel provides tools for integrating AI agents with enterprise applications.

These frameworks enable organizations to design scalable AI agent ecosystems while maintaining control over communication and coordination.

Best Practices for Agentic AI Design

Successful agentic AI systems follow several key design principles.

Keep Agents Specialized

Each agent should perform a clearly defined task.

Minimize Communication

Avoid unnecessary messaging between agents to reduce latency and complexity.

Use Orchestration

Introduce orchestration layers to coordinate workflows efficiently.

Ensure Observability

Implement monitoring tools that track agent actions and system behavior.
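A lightweight starting point, sketched here with the standard library only, is to wrap each agent in a decorator that records its name, outcome, and duration. The `traced` decorator and the in-memory `TRACE` list are hypothetical; a production system would send these records to a logging or tracing backend.

```python
import functools
import time

TRACE: list[dict] = []


def traced(agent_name: str):
    """Record one trace entry per agent call, whether it succeeds or fails."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise  # re-raise so callers still see the failure
            finally:
                TRACE.append({"agent": agent_name,
                              "status": status,
                              "duration_s": time.perf_counter() - start})
        return wrapper
    return decorator


@traced("validation")
def validate(amount: float) -> bool:
    if amount < 0:
        raise ValueError("negative amount")
    return True


validate(10)
try:
    validate(-1)
except ValueError:
    pass
print([entry["status"] for entry in TRACE])
```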

These practices help organizations build robust AI infrastructure capable of supporting enterprise-scale automation.

Conclusion

Agentic AI represents a major shift in how intelligent systems are designed. By combining LLM-powered reasoning with autonomous execution, organizations can automate increasingly complex workflows.

However, the introduction of multi-agent systems raises important architectural questions—particularly around communication.

While agent-to-agent communication can enable collaboration and distributed problem solving, it also introduces latency, coordination challenges, and system complexity.

For this reason, many modern enterprise AI architectures favor orchestration-based designs, where agents operate independently and coordination happens through centralized workflow management.

Ultimately, the most effective agentic AI systems are not those with the most agents communicating with each other, but those designed with clear architecture, efficient coordination, and minimal unnecessary interaction.

As enterprise AI adoption accelerates, organizations that focus on scalable agent orchestration, robust AI infrastructure, and well-designed agent ecosystems will unlock the full potential of intelligent automation.

Agentic AI Architecture: Frequently Asked Questions

1. What is Agentic AI?

Agentic AI refers to AI systems built around autonomous agents that can reason, plan, and execute tasks independently. It is often powered by large language models (LLMs) combined with orchestration layers, memory systems, and tool integrations. These agents can perform tasks such as data analysis, workflow automation, and decision support in enterprise environments.

2. What are AI agents in agentic AI systems?

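AI agents are intelligent components that act autonomously to achieve defined goals. In agentic AI architectures, agents typically:

- Gather data from APIs or databases

- Reason using large language models (LLMs)

- Plan multi-step actions

- Interact with enterprise systems

- Evaluate results and adjust strategies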

These capabilities allow agents to function as autonomous decision systems within modern AI infrastructure.

3. Do AI agents need to communicate with each other?

While multi-agent systems sometimes require communication for collaboration or distributed problem solving, many enterprise agentic AI architectures minimize direct agent communication.

Instead, systems often use:

- AI orchestration layers

- Shared workflow state

- Centralized task managers

These approaches reduce system complexity while maintaining efficient coordination between agents.

4. What is the difference between single-agent and multi-agent AI systems?

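A single-agent system relies on one agent that calls tools and manages the multi-step workflow internally. A multi-agent system distributes tasks across several specialized agents, such as:

- Data agents

- Analysis agents

- Validation agents

- Execution agents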

Multi-agent systems improve scalability and specialization but may require coordination mechanisms like agent communication or orchestration frameworks.

5. What are the benefits of AI orchestration in agentic systems?

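AI orchestration places a central orchestrator in charge of workflow coordination, so agents do not need to communicate directly. Benefits include:

- Centralized coordination of agents

- Improved observability and monitoring

- Reduced communication complexity

- Better scalability for enterprise AI systems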

This approach is widely used in enterprise AI architectures because it enables reliable automation across complex workflows.